Standard scraping tools often struggle with dynamic content. For such sites, you need open-source JavaScript web scraping tools that are designed to interact with web elements dynamically and mimic user actions.

This article explores some of the top open-source JavaScript tools and frameworks for web scraping, their standout features, benefits, and the challenges you might face while using them.

Let’s begin.

Best Open-Source JavaScript Web Scraping Tools: A Comparison Chart

Here is a list and basic overview of the best open-source JavaScript web scraping tools and frameworks, which will be discussed in detail later in this article.

- Puppeteer

- Playwright

- Cheerio

- Crawlee

- Crawler

| Features/Tools | GitHub Stars | GitHub Forks | GitHub Open Issues | Last Updated | Documentation | License |
| --- | --- | --- | --- | --- | --- | --- |
| Puppeteer | 89.9k | 9.2k | 255 | March 2025 | Excellent | Apache-2.0 |
| Playwright | 69.8k | 3.9k | 724 | March 2025 | Good | Apache-2.0 |
| Cheerio | 29.1k | 1.7k | 28 | March 2025 | Good | MIT |
| Crawlee | 17k | 762 | 134 | March 2025 | Excellent | Apache-2.0 |
| Crawler | 6.7k | 878 | 29 | December 2024 | Good | MIT |

Note: Data as of March 2025

Interested in enhancing your web scraping skills? Then check out our article on Best Open-Source Web Scraping Tools and Frameworks for faster, wiser, and more efficient data extraction.

Puppeteer

Puppeteer is a Node.js library that provides a powerful yet simple API for controlling headless browsers. Initially designed for Chrome, Puppeteer now supports both Chrome and Firefox.

A headless browser can send and receive requests but has no GUI. It works in the background, performing actions as instructed by an API.

With Puppeteer, you can simulate user interactions, including typing, clicking, and navigation. This makes it ideal for web scraping, automated testing, and server-side rendering.

Puppeteer allows you to generate PDFs, monitor site performance, and inspect how browsers render URLs—all without needing a visible UI.

Requires Version – Node.js 18 or greater for current releases

Available Selectors – CSS

Available Data Formats – JSON

Pros

- Full-featured API that covers most automation and scraping use cases

- Now supports both Chrome and Firefox, expanding its flexibility

- Ideal for JavaScript-heavy websites

Cons

- It is still primarily optimized for Chromium-based browsers

- Supports only JSON format for data extraction

Installation

To install Puppeteer in your project, run the following:

npm i puppeteer

This will install Puppeteer and download a recent version of the browser to run the Puppeteer code. By default, Puppeteer works with the Chromium browser, but you can also use Chrome.

You can also use the lightweight version of Puppeteer, puppeteer-core, which does not download a browser. To install it, run:

npm i puppeteer-core

You can configure puppeteer-core to work with an installed version of Chrome or Firefox.

Best Use Case

- To scrape dynamic JavaScript-based websites

- To automate UI testing for Chrome and Firefox

- To capture website screenshots and generate PDFs

- To monitor web performance and rendering

Playwright

Playwright is a Node.js library that automates multiple browsers using a single API. It enables reliable, fast, and evergreen cross-browser automation, supporting Chromium, WebKit, and Firefox.

Designed to improve UI testing, Playwright eliminates flakiness, accelerates execution speed, and provides deep insights into browser behavior.

Its browser context feature allows the simulation of multiple devices or user sessions within a single browser instance, making testing more efficient.

Requires Version – Node.js 18 or above for current releases

Available Selectors – CSS

Available Data Formats – JSON

Pros

- Cross-browser support (Chromium, WebKit, and Firefox)

- Detailed and comprehensive documentation

Con

- Playwright relies on patched builds of WebKit and Firefox; only the debugging protocols are patched, not the actual rendering engines

Installation

To install the package:

npm i -D playwright

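This command installs Playwright. Depending on the Playwright version, the browser binaries for Chromium, Firefox, and WebKit are either downloaded along with it or installed separately by running npx playwright install. Once installed, you can use Playwright in a Node.js script to automate web browser interactions.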

Best Use Case

If you need an efficient tool to perform UI testing across multiple browsers, you should use Playwright.

Cheerio

Cheerio is a fast and flexible library that parses raw HTML and XML documents in Node.js environments. It implements a subset of core jQuery, providing a familiar syntax for those accustomed to jQuery.

With Cheerio, you can write filter functions to fine-tune which data you want from your selectors. If you are writing a web scraper in JavaScript, the Cheerio API is a fast option that makes parsing, manipulating, and rendering efficient.

Requirements – Up-to-date versions of Node.js and npm

Available Selectors – CSS

Pros

- Parsing, rendering, and manipulating documents is very efficient

- Flexible, easy to use

- Very fast

Con

- Less suitable for scraping dynamic websites that rely on JavaScript for content rendering

Installation

To install the required modules using npm, type the following command:

npm install cheerio

Best Use Case

If you need speed and efficiency, go for Cheerio.

Go the hassle-free route with ScrapeHero

Why worry about expensive infrastructure, resource allocation and complex websites when ScrapeHero can scrape for you at a fraction of the cost?

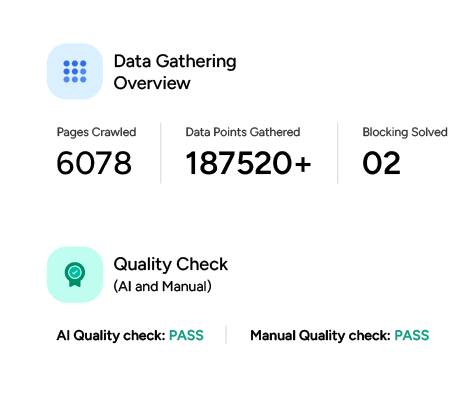

Crawlee

Crawlee is the successor to the Apify SDK. It is a Node.js library that positions itself as a universal web scraping library for JavaScript, with support for Puppeteer, Playwright, Cheerio, JSDOM, and raw HTTP requests.

Crawlee is built in TypeScript, which enhances code completion and type safety. It also offers anti-blocking features such as automatic proxy rotation and session management, and provides a familiar interface for users transitioning from the Apify SDK.

Requirements – Crawlee requires Node.js 16 or higher

Available Selectors – CSS selectors for DOM traversal and manipulation when working with HTML content

Available Data Formats – JSON, JSONL, CSV, XML, HTML, and others. Excel is not natively supported

Pros

- Runs on Node.js and is built in TypeScript, which improves code completion

- Automatic scaling and proxy management

- Mimics browser headers and TLS fingerprints

Cons

- Advanced configurations may require a deeper understanding of the system

- The interface features may be difficult for new users

Installation

To install Crawlee in your Node.js project, run:

npm install crawlee

Best Use Case

Crawlee is an excellent choice if you are looking for a rich developer experience, anti-blocking features, and seamless integration.

Crawler

Crawler is a popular, fast web crawler for Node.js. If you prefer coding in JavaScript or are working primarily in a JavaScript project, Crawler is a suitable choice.

Its installation is also simple. For server-side HTML parsing, it uses Cheerio or JSDOM, with JSDOM being the more robust option.

Requires Version – Node v4.0.0 or greater

Available Selectors – CSS, XPath

Available Data Formats – CSV, JSON, XML

Pros

- Easy installation

Con

- It does not natively support Promises and relies on callback functions instead

Installation

To install this package with npm:

npm install crawler

Best Use Case

Use Crawler if you need a lightweight web crawler that combines efficiency and convenience.

Why You Need ScrapeHero Web Scraping Service

Open-source JavaScript web scraping tools offer flexibility and control. However, they also come with challenges, such as handling dynamic content and CAPTCHAs.

All these challenges require technical expertise and continuous maintenance. A wiser option is to rely on a web scraping service like ScrapeHero for scalable, hassle-free data extraction.

We can provide you with accurate, high-volume data without the complexities of maintaining scrapers yourself, all while ensuring compliance with legal and privacy regulations.

Frequently Asked Questions

JavaScript web scraping frameworks are libraries that help you automate data extraction from websites. Popular options include Puppeteer, Playwright, and Crawlee.

Some of the best JavaScript web scraping tools in 2025 are Puppeteer, Playwright, Cheerio, and Crawlee.

Open-source JavaScript web scraping tools are cost-effective, flexible, and customizable.